The fuel supply chain is currently navigating its most turbulent period in modern history, facing a landscape where geopolitical instability and economic shifts can render a logistics plan obsolete in a matter of hours. As global energy corridors face unprecedented pressure, the industry is moving away from rigid, manual scheduling toward dynamic, continuous replanning. Rohit Laila brings decades of deep-domain expertise in logistics and supply chain innovation to this discussion, offering a roadmap for organizations looking to transform chaos into a competitive advantage. In this conversation, we explore the shift from traditional batch processing to real-time optimization, the critical role of “real-world” constraints in fuel delivery, and a methodology for deploying production-ready planning systems in just four weeks.

Global shipping corridors are seeing massive volatility, with insurance premiums recently jumping from 0.25% to 3%. How do these spikes impact freight economics, and what specific steps can planners take to prevent a localized maritime delay from cascading into a total network failure?

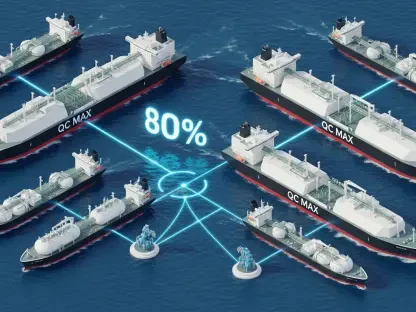

The jump in hull war-risk premiums from 0.25% to 3% isn’t just a balance sheet line item; it represents a tectonic shift that, for a large tanker, can translate into millions of dollars in additional cost for every voyage into a high-risk zone. When you consider that roughly one-fifth of the world’s crude oil, refined products, and LNG passes through these specific corridors, you realize that a localized delay is never truly localized; it is a ripple that quickly becomes a tidal wave. For a planner, the weight of this volatility is felt when a vessel delay shifts a berth timing, which then chokes terminal throughput and immediately starves inland depots of inventory. To prevent this cascade, planners must abandon the “hope-and-hold” strategy and move toward a model where they are constantly evaluating the “multiplier effect” of disruptions. This means having the digital infrastructure to recalculate routing and inventory positions the moment a signal of instability arrives, rather than waiting for a daily report. By the time a manual update is processed, the window to reroute or reallocate stock has often already slammed shut, leaving the network in a state of expensive, reactive firefighting.
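The following minimal Python sketch makes the “multiplier effect” concrete. It shows how a small vessel delay can be amplified by discrete berth windows until it reaches the depots. The `Depot` fields, the berth slot list, and all the numbers are hypothetical. The model also assumes instantaneous discharge on the berth day and a fixed terminal-to-depot transfer time. A real planner would additionally model terminal throughput and tank capacity.

```python
from dataclasses import dataclass


@dataclass
class Depot:
    name: str
    stock: float          # current inventory, m3 (hypothetical)
    daily_demand: float   # expected daily draw, m3/day
    transfer_days: float  # terminal-to-depot lead time, days


def next_berth_slot(arrival_day: float, slots: list[float]) -> float:
    """Return the first berth slot at or after the vessel's actual arrival."""
    candidates = [s for s in slots if s >= arrival_day]
    if not candidates:
        raise ValueError("no berth slot after arrival; extend the slot horizon")
    return min(candidates)


def cascade(planned_arrival: float, delay: float, slots: list[float],
            depots: list[Depot]) -> dict[str, float]:
    """Days of stockout per depot caused by a vessel delay.

    Assumes day 0 is now, discharge happens on the berth day, and each depot
    is resupplied transfer_days after discharge.
    """
    berth_day = next_berth_slot(planned_arrival + delay, slots)
    shortfall = {}
    for d in depots:
        days_of_cover = d.stock / d.daily_demand
        resupply_day = berth_day + d.transfer_days
        shortfall[d.name] = max(0.0, resupply_day - days_of_cover)
    return shortfall


depots = [Depot("A", 400, 100, 1), Depot("B", 900, 100, 2)]
slots = [2.0, 5.0, 8.0]
print(cascade(2.0, 0.0, slots, depots))  # {'A': 0.0, 'B': 0.0}: on time
print(cascade(2.0, 0.5, slots, depots))  # {'A': 2.0, 'B': 0.0}: slip misses the slot
```

In this toy example, a half-day slip misses the day-2 berth slot and waits until day 5. That leaves depot A dry for two days. The jump from hours of delay to days of stockout is the cascade the answer describes.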

Traditional planning models often rely on manual exception handling and batch processing. When factors like labor rules, port congestion, and inventory thresholds collide, why does this manual approach break down, and what are the primary trade-offs when transitioning to continuous replanning?

Manual planning breaks down because the human brain, and certainly a standard Excel spreadsheet, is simply not wired to process the sheer number of variable combinations we see today. When port congestion is delaying an arrival while you are simultaneously hitting a strict labor compliance window for drivers and watching a depot drop toward its minimum inventory threshold, the math becomes overwhelming. In a manual environment, every handoff between teams is a point of failure where data can be misinterpreted or lost, leading to “guessed” scenarios rather than calculated ones. Transitioning to continuous replanning does require a cultural shift, because it moves the team away from the comfort of a fixed daily schedule toward a living, breathing operational model. The primary trade-off is the upfront investment in data integrity and the move away from “gut-feel” decision-making, but the reward is an environment where disruptions trigger automatic adjustments rather than organizational panic. In our experience, organizations that embrace this transition find that their planners spend less time maintaining data and more time acting as strategic decision-makers who can steer the network through a storm.
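Below is a minimal sketch of the constraint collision described above, assuming a single planned delivery and hypothetical field names. Each incoming event updates the state and immediately re-checks the interacting constraints, rather than waiting for a batch run. Real driver-hours rules (breaks, daily and weekly limits) are far richer than the single duty-window number used here.

```python
from dataclasses import dataclass


@dataclass
class DeliveryState:
    product_ready_h: float  # hours until product is available at the terminal
    drive_h: float          # terminal-to-depot driving time, hours
    duty_window_h: float    # hours until the driver's duty window closes (simplified)
    depot_stock: float      # current depot inventory, m3
    depot_min: float        # minimum inventory threshold, m3
    hourly_draw: float      # depot consumption, m3/h


def check(s: DeliveryState) -> list[str]:
    """Re-evaluate the interacting constraints for one planned delivery."""
    issues = []
    arrival_h = s.product_ready_h + s.drive_h
    hours_to_min = (s.depot_stock - s.depot_min) / s.hourly_draw
    if arrival_h > hours_to_min:
        issues.append("depot breaches minimum before delivery")
    if arrival_h > s.duty_window_h:
        issues.append("driver exceeds duty window")
    return issues


def on_event(s: DeliveryState, attr: str, value: float) -> list[str]:
    """Continuous replanning: every incoming update triggers an immediate re-check."""
    if not hasattr(s, attr):
        raise AttributeError(f"unknown field: {attr}")
    setattr(s, attr, value)
    return check(s)


state = DeliveryState(product_ready_h=4, drive_h=3, duty_window_h=10,
                      depot_stock=500, depot_min=200, hourly_draw=25)
print(check(state))                           # []
print(on_event(state, "product_ready_h", 8))  # port congestion delays loading
print(on_event(state, "hourly_draw", 40))     # demand rises at the same time
```

The arithmetic for one delivery is trivial. The value is that the same re-check runs automatically on every event across hundreds of deliveries, which a manual spreadsheet process cannot keep up with.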

Fuel logistics involve rigid constraints like compartment compatibility, driver certifications, and contamination rules. How does ignoring these granular details during the planning phase compromise execution, and how can organizations integrate these “real-world” variables into a core optimization engine?

In the world of fuel distribution, a plan that looks good on paper but ignores the reality of the truck or the terminal is worse than no plan at all. If a system suggests a route but fails to account for compartment constraints or the specific certifications a driver needs to handle a certain grade of fuel, execution collapses at the loading rack. This lack of “constraint realism” is where many generic logistics tools fail, because they treat these rules as secondary checks rather than as the foundation of the logic. To truly integrate these variables, they must be built into the core optimization engine so that every generated route is executable by construction and compliant with labor and safety regulations. When product flows tighten, there is no room to absorb planning errors, and a single contamination mistake can lead to a catastrophic loss of product and reputation. By building a “digital twin” of these constraints, organizations ensure that even at the height of a crisis, the plans pushed to the field are realistic, safe, and ready for immediate action.
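One way to make such rules part of the core logic, rather than a post-check, is to let them define which assignments the search may consider at all. The Python sketch below assigns orders to truck compartments using a small backtracking search. The flush table, the certification names, and the data layout are hypothetical placeholders, not real product-quality rules. A production engine might express the same hard constraints inside a mixed-integer or constraint-programming model covering many trucks at once.

```python
# Hypothetical rule tables for illustration only; real rules come from the
# operator's product-quality and safety procedures.
NEEDS_FLUSH = {("diesel", "jet"), ("gasoline", "jet")}  # (previous, next) grade pairs
REQUIRED_CERT = {"jet": "aviation", "gasoline": "hazmat", "diesel": "hazmat"}


def compatible(order: tuple[str, float], comp: dict, driver_certs: set[str]) -> bool:
    """True if one order may go into one compartment under every hard rule."""
    grade, volume = order
    return (volume <= comp["capacity"]
            and (comp["last"], grade) not in NEEDS_FLUSH
            and REQUIRED_CERT[grade] in driver_certs)


def assign(orders, comps, driver_certs):
    """Backtracking search for an order -> compartment mapping satisfying all rules.

    Assumes each order fills exactly one compartment (no splitting or co-loading).
    Returns None when no executable load plan exists.
    """
    def solve(i, used, plan):
        if i == len(orders):
            return dict(plan)
        for j, comp in enumerate(comps):
            if j not in used and compatible(orders[i], comp, driver_certs):
                plan[i] = j
                found = solve(i + 1, used | {j}, plan)
                if found is not None:
                    return found
                del plan[i]  # undo and try the next compartment
        return None

    return solve(0, frozenset(), {})


orders = [("jet", 8000), ("diesel", 5000)]
comps = [{"capacity": 6000, "last": "diesel"},
         {"capacity": 9000, "last": "jet"},
         {"capacity": 9000, "last": "diesel"}]
print(assign(orders, comps, {"aviation", "hazmat"}))  # {0: 1, 1: 0}
print(assign(orders, comps, {"hazmat"}))              # None: driver lacks jet certification
```

Because infeasible pairings are never generated, any plan the search returns is executable under these rules. A `None` result is useful in its own right: it tells the planner before dispatch that the truck, driver, or order mix must change.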

Decision speed often depends on pre-evaluating “what-if” scenarios before a crisis hits. What specific variables, such as shifted arrival times or sudden sourcing changes, should be prioritized in these models, and how does having pre-tested decision paths fundamentally change a team’s response time?

Decision speed is essentially “bought” through the hard work of scenario planning long before the actual crisis arrives. The variables that demand the most attention are those that represent “single points of failure,” such as the closure of a major transit corridor, a sudden 10-fold jump in charter costs, or a terminal missing its critical unloading slot. When a team has already modeled what happens if they have to source product from a completely different origin or if a major depot loses 30% of its capacity, they are no longer analyzing the problem when it occurs; they are simply selecting a pre-validated solution. This fundamentally changes the team’s response time from days to minutes, as they are not starting from zero but are instead moving from one pre-tested decision path to another. It removes the emotional weight of a crisis because the team feels empowered by a playbook of options, allowing them to maintain service levels and protect margins while their competitors are still trying to figure out the extent of the damage.
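The “pre-tested decision path” idea can be sketched as two steps. First, an offline step evaluates response options for each modeled scenario. Second, a crisis-time step is reduced to a lookup. In this Python illustration, the scenario names echo the examples above (a tenfold charter-cost jump, a 30% depot capacity loss, a corridor closure). The `Option` type, the costs, the service levels, and the 95% service floor are all invented assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    cost_usd: float       # hypothetical incremental cost of the response
    service_level: float  # fraction of demand still met


def build_playbook(scenarios: dict[str, list[Option]],
                   min_service: float) -> dict[str, Option]:
    """Offline step: per scenario, keep the cheapest option that meets the service floor."""
    playbook = {}
    for scenario, options in scenarios.items():
        viable = [o for o in options if o.service_level >= min_service]
        if viable:
            playbook[scenario] = min(viable, key=lambda o: o.cost_usd)
    return playbook


def respond(playbook: dict[str, Option], scenario: str) -> str:
    """Crisis-time step: a lookup, not an analysis. Unmodeled cases escalate."""
    option = playbook.get(scenario)
    return option.name if option is not None else "escalate: no pre-tested path"


# All figures below are invented for illustration.
scenarios = {
    "charter_rates_x10": [
        Option("keep current charter", 10_000_000, 1.00),
        Option("source from alternative origin", 4_000_000, 0.97),
        Option("draw down safety stock", 500_000, 0.90),
    ],
    "depot_capacity_minus_30pct": [
        Option("divert to neighbouring depot", 800_000, 0.98),
        Option("increase delivery frequency", 600_000, 0.93),
    ],
    "corridor_closed": [
        Option("wait for reopening", 0, 0.60),
    ],
}
playbook = build_playbook(scenarios, min_service=0.95)
print(respond(playbook, "charter_rates_x10"))  # source from alternative origin
print(respond(playbook, "corridor_closed"))    # escalate: no pre-tested path
```

The `corridor_closed` result shows the gap the answer warns about. If no acceptable response was modeled in advance, the team is back to analyzing the problem under pressure.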

Many organizations fear that upgrading logistics software requires a year-long commitment. If a production-ready system must be deployed in just four weeks, what does the week-by-week progression look like, and how do you move from a data challenge to full inventory visibility so quickly?

The fear of a 12-to-18-month implementation is valid, but modern deployment models like the one we use for the Fuel Supply Optimizer have turned that timeline on its head. In Week 1, we conduct a “Demo Challenge” where we take the client’s actual business rules and a sample of their real planning data to show them the system handling their specific constraints in real time. It is a proof of value, not a sales pitch. During Weeks 2 through 4, we transition from that validated concept to building the Minimum Viable Product (MVP), which involves moving data from messy Excel workflows into a structured environment and configuring the full set of constraints. By the end of Week 4, the system is live, providing full fuel inventory visibility, vessel planning connected to inventory, and distribution schedules that are validated against real-world rules. This isn’t a “sandbox” or a pilot project; it is a live operational capability that allows planners to generate real plans, so the organization can layer on further optimization while already reaping the benefits of a modernized system.
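“Vessel planning connected to inventory” can be pictured as a rolling projection. Each scheduled discharge and each day’s planned liftings update the terminal’s stock, so a shifted vessel immediately shows up as a future shortfall or overflow. The Python sketch below is a simplified illustration, not the Fuel Supply Optimizer’s implementation. It assumes a single product in a single tank group, daily time buckets, and hypothetical volumes.

```python
def project_inventory(opening: float, discharges: dict[int, float],
                      liftings: list[float], capacity: float,
                      minimum: float) -> list[tuple[int, float, str]]:
    """Project end-of-day terminal stock and flag capacity or minimum-stock breaches.

    discharges maps day index -> volume discharged by a scheduled vessel;
    liftings holds the planned outbound volume for each day. Discharge and
    liftings on the same day are simply netted (a simplifying assumption).
    """
    stock = opening
    rows = []
    for day, lifting in enumerate(liftings):
        stock += discharges.get(day, 0.0) - lifting
        if stock > capacity:
            flag = "over capacity"
        elif stock < minimum:
            flag = "below minimum"
        else:
            flag = ""
        rows.append((day, stock, flag))
    return rows


plan = project_inventory(opening=10_000, discharges={2: 30_000},
                         liftings=[4_000] * 5, capacity=35_000, minimum=3_000)
for day, stock, flag in plan:
    print(day, stock, flag)
```

Here the day-1 dip below minimum is visible before the vessel arrives. Moving the discharge to day 1 in the input clears the flag. A planner can rerun this kind of check in seconds once the data lives in one structured model rather than in separate spreadsheets.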

True resilience requires visibility tied directly to planning logic rather than just having more data. How do weak signals, like updated ETAs or shifting inventory positions, trigger automatic recalculations, and what metrics best define success in a modern, connected planning environment?

Data without logic is just noise, and in a high-stakes environment like fuel logistics, more data can actually lead to paralysis if you don’t have a way to interpret it. True resilience is found when “weak signals,” such as a two-hour delay in a vessel’s ETA or a slight shift in a depot’s consumption rate, automatically trigger a recalculation of the downstream plan. Instead of a dispatcher having to manually check every ETA, the system identifies which delays actually affect service levels and flags them for action, or better yet, suggests a re-optimized route. Success in this connected environment is defined by metrics like “time-to-react,” the percentage of plans that are executable without manual intervention, and the stability of inventory levels despite external shocks. When you see an organization move away from reactive “manual firefighting” and toward strategic, controlled adjustment, that is the clearest sign that its planning logic is finally catching up to the speed of its data.
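Below is a minimal sketch of signal triage. It assumes each ETA update arrives with the downstream depot’s remaining cover already computed. It also uses a hypothetical fixed buffer for discharge, onward transfer, and margin. The sketch also gives simple, illustrative definitions for the three metrics named in the answer:
- time-to-react as the gap between signal and replan;
- the share of plans executed with no manual edits;
- the population standard deviation of daily stock as a rough stability measure.

These definitions are assumptions, not standard industry formulas.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class EtaSignal:
    vessel: str
    old_eta_h: float      # previous ETA, hours from now
    new_eta_h: float      # updated ETA, hours from now
    depot_cover_h: float  # hours until the downstream depot hits its minimum


def needs_replan(sig: EtaSignal, buffer_h: float = 6.0) -> bool:
    """Trigger a recalculation only when a slip eats into the safety buffer.

    buffer_h is a hypothetical allowance for discharge, onward transfer, and margin.
    """
    return sig.new_eta_h > sig.old_eta_h and sig.new_eta_h + buffer_h > sig.depot_cover_h


def kpis(replans: list[dict], daily_stock: list[float]) -> dict[str, float]:
    """Illustrative versions of time-to-react, touchless share, and inventory stability."""
    return {
        "mean_time_to_react_h": mean(r["replanned_at_h"] - r["signal_at_h"] for r in replans),
        "touchless_share": sum(r["manual_edits"] == 0 for r in replans) / len(replans),
        "inventory_stdev": pstdev(daily_stock),
    }


signals = [EtaSignal("MT Alpha", 48, 50, 80), EtaSignal("MT Bravo", 48, 50, 54)]
print([s.vessel for s in signals if needs_replan(s)])  # ['MT Bravo']
```

Both vessels report the same two-hour slip, but only MT Bravo’s slip eats into the depot’s buffer, so only it triggers a recalculation. That filtering is what keeps more data from turning into more noise.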

What is your forecast for fuel supply logistics?

I believe we are entering an era where the “efficient” supply chain is being permanently replaced by the “resilient” supply chain, where the ability to adapt to instability is more valuable than the ability to predict it. We will see a massive consolidation of planning tools, moving away from fragmented spreadsheets toward unified platforms that can simulate the entire network from the vessel to the final-mile delivery. My forecast is that “Decision Intelligence” will become the standard, where AI-driven engines won’t just present data, but will actively recommend the best trade-offs between cost, carbon footprint, and service reliability. Organizations that continue to rely on manual, batch-based processes will find themselves structurally unable to compete in a market where volatility is the only constant. Ultimately, the winners in the fuel sector will be those who treat their logistics planning as a core strategic asset: an agile, living system that can breathe with the market and turn every disruption into an opportunity for optimization.