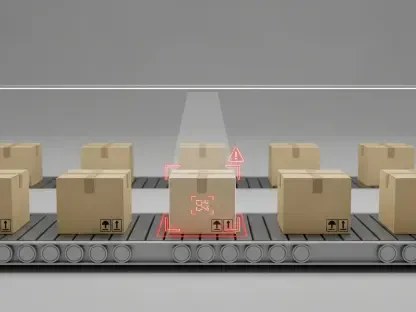

The historical reliance on static representations of human workers within industrial simulation environments has created a significant gap between theoretical facility throughput and the actual operational realities of the factory floor. For decades, the primary focus of digital modeling remained tethered to the “hard” elements of production, such as the physical dimensions of equipment, the speed of conveyor systems, and the logistics of automated machinery. While these models effectively predicted mechanical performance, they frequently overlooked the inherent variability of the human factor. Human operators do not move with the mathematical precision of a robotic arm; they experience fatigue, face ergonomic limitations, and navigate complex spatial environments that change in real time. This oversight often led to unforeseen bottlenecks and safety concerns during the transition from digital planning to physical execution. Reallusion has emerged as a disruptive force in this space by prioritizing the human element within the simulation stack.

The Technological Foundation: Modular Human Modeling

The shift toward a human-centric approach required a complete overhaul of how digital actors are animated and managed within a virtual industrial ecosystem. Rather than treating characters as secondary visual assets, the latest framework utilizes a modular architecture that bridges the gap between micro-level actions and macro-level system dynamics. At the most granular level, tools such as Motion Director allow for the precise control of individual characters using behavioral presets that are grounded in real-world physics. This means that when a worker is simulated pushing a heavy pallet jack, the software accounts for the specific body mechanics, pacing adjustments, and spatial requirements necessary for that specific task. By moving away from idealized, linear movements, planners can now account for the natural fluctuations in cycle times that occur during manual material handling. This granularity ensures that the digital twin of a facility is not just a visual representation but a functional operational model that reflects actual labor.
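The gap between an idealized, fixed cycle time and a variable manual one can be illustrated with a small Monte Carlo sketch. This is not Motion Director's internal model or any Reallusion API. The function name `simulate_shift`, the 90-second nominal cycle, the lognormal noise, and the linear fatigue factor are all assumptions chosen for illustration.

```python
import random
import statistics

def simulate_shift(nominal_cycle_s, shift_s, variability=0.15,
                   fatigue_per_hour=0.02, seed=0):
    """Count pallet moves completed in one shift under variable cycle times.

    All parameters are hypothetical; real values would come from measured data.
    """
    rng = random.Random(seed)
    elapsed = 0.0
    completed = 0
    while True:
        hours_worked = elapsed / 3600.0
        # Assumed fatigue model: the mean cycle time grows linearly with hours worked.
        mean_cycle = nominal_cycle_s * (1.0 + fatigue_per_hour * hours_worked)
        # Lognormal noise keeps every sampled cycle time positive and right-skewed.
        cycle = mean_cycle * rng.lognormvariate(0.0, variability)
        if elapsed + cycle > shift_s:
            break
        elapsed += cycle
        completed += 1
    return completed

nominal = 90.0           # seconds per pallet move (assumed)
shift = 8 * 3600         # one 8-hour shift, in seconds
ideal = int(shift // nominal)   # 320 moves if every cycle took exactly 90 s
runs = [simulate_shift(nominal, shift, seed=s) for s in range(200)]

print("idealized moves per shift:", ideal)
print("simulated mean:", statistics.mean(runs))
print("simulated range:", min(runs), "to", max(runs))
```

Under these assumptions, every simulated shift completes fewer moves than the idealized count. That shortfall is the kind of discrepancy a fixed-cycle model tends to hide.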

Building on these individual interactions, the simulation suite extends its capabilities to manage complex group behaviors through advanced crowd dynamics and environmental interaction. The integration of “Crowd Sim” functionality allows industrial engineers to visualize how large groups of operators move through high-traffic zones or navigate bottlenecks during shift changes. Furthermore, the process of setting up these environments has been streamlined through rapid prototyping tools like “BuildingGen,” which enables the generation of modular factory interiors from customizable blueprints. By automating the distribution of tools and accessories to hundreds of digital workers simultaneously, the platform eliminates the tedious manual configuration that previously hindered large-scale simulations. This automation allows engineering teams to focus their efforts on refining production logic and safety protocols rather than spending hundreds of hours on asset placement. The result is a highly responsive environment where every worker and tool interacts within a cohesive, data-driven system.
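A rough sense of why shift-change bottlenecks matter can be had from a one-dimensional queue, stepped one second at a time. This is a deliberately simplified sketch, not how Crowd Sim models pedestrians. The function `doorway_queue`, the arrival rate, and the doorway capacities are hypothetical values for illustration only.

```python
def doorway_queue(arrivals_per_s, capacity_per_s):
    """Step a simple queue one second at a time.

    Returns (peak queue length, seconds until the queue is empty).
    """
    queue = 0
    peak = 0
    t = 0
    while t < len(arrivals_per_s) or queue > 0:
        arriving = arrivals_per_s[t] if t < len(arrivals_per_s) else 0
        queue += arriving
        # At most `capacity_per_s` workers pass through the doorway each second.
        queue -= min(queue, capacity_per_s)
        peak = max(peak, queue)
        t += 1
    return peak, t

# Assumed scenario: 120 operators reach a doorway at 4 per second for 30 seconds.
arrivals = [4] * 30

print(doorway_queue(arrivals, capacity_per_s=2))  # (60, 60): narrow doorway
print(doorway_queue(arrivals, capacity_per_s=3))  # (30, 40): widened doorway
```

Even this crude model shows the peak queue halving when the assumed doorway capacity rises from two to three workers per second. A full crowd simulation would add collision avoidance, route choice, and individual walking speeds.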

Advanced Logic: Orchestrating Motion and Real-World Data

The introduction of “Motion Planning” represents a fundamental evolution from traditional, scripted animation sequences to a logic-driven system that manages complex human behaviors. Utilizing an intuitive node-graph workflow, this technology acts as the central intelligence for the simulation, allowing users to link different operational zones and task sequences into a continuous flow. Instead of creating isolated “one-off” scenes, stakeholders can now build comprehensive behavioral systems where digital humans react dynamically to changes in their environment. This modularity is particularly valuable for conducting “before-and-after” studies of facility layouts. By adjusting the logic within the node graph, planners can immediately see how a change in the assembly line configuration impacts worker travel distances or handoff efficiency. This systemic approach provides measurable feedback that allows for the validation of process improvements before any physical changes are implemented on the factory floor, reducing the risk of costly errors.
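The before-and-after comparison described above can be sketched as a route through named zones whose coordinates change between layouts. This does not use the Motion Planning node graph or any Reallusion API. The zone names, coordinates, route, and walking speed below are hypothetical, and distances are straight-line rather than obstacle-aware.

```python
import math

def route_length(layout, route):
    """Sum straight-line distances (metres) between consecutive zones on a route."""
    return sum(math.dist(layout[a], layout[b]) for a, b in zip(route, route[1:]))

# Hypothetical zone coordinates in metres for the current layout.
before = {
    "kitting": (0, 0),
    "assembly": (30, 0),
    "inspection": (30, 40),
    "packing": (0, 40),
}
# Proposed layout: inspection and packing are moved closer to the line.
after = dict(before, inspection=(30, 10), packing=(0, 10))

# One work cycle visits each zone in order and returns to kitting.
route = ["kitting", "assembly", "inspection", "packing", "kitting"]

walk_before = route_length(before, route)  # 140.0 m per cycle
walk_after = route_length(after, route)    # 80.0 m per cycle
saved_m = walk_before - walk_after

WALK_SPEED_M_S = 1.4  # assumed average walking speed
print(f"travel saved per cycle: {saved_m:.0f} m "
      f"(about {saved_m / WALK_SPEED_M_S:.0f} s of walking)")
```

Keeping the route fixed and changing only the layout isolates the effect of the layout change on travel distance, which is the quantity a planner would compare across the two configurations.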

To ground these logical models in high-fidelity data, the ecosystem incorporates “Video Mocap” technology to bridge the physical and digital worlds. It lowers the barrier to motion capture by allowing companies to convert standard video footage of actual employees into editable 3D motion data. Historically, achieving this level of realism required expensive motion-capture suits, specialized cameras, and dedicated laboratory space. As of 2026, a manager can record a specific work sequence on a standard smartphone and integrate that movement data into a simulation. This capability is crucial for capturing the nuances of specialized manual tasks that cannot be found in generic motion libraries. By using real-world performance data to drive digital twins, organizations can build validation models that reflect the actual walk paths and cycle times of their own workforce, leading to more reliable projections.

Industrial Integration: Ecosystem Scalability and Collaboration

A robust human-centric simulation is heavily dependent on the quality and availability of its digital assets, a challenge addressed through the expansive “ActorCore” ecosystem. This library provides a massive repository of simulation-ready digital humans, industry-specific props, and specialized motions that are optimized for real-time performance. By offering a standardized set of assets tailored for factory maintenance, warehouse operations, and public space management, the platform allows companies to bypass the time-consuming and expensive process of custom 3D modeling. This “plug-and-play” accessibility ensures that even smaller organizations can deploy sophisticated simulations without the need for a large team of specialized artists. The focus remains on the operational logic and the optimization of human-machine interaction, as the foundational visual and behavioral elements are already verified and ready for deployment within the simulation environment.

The versatility of this platform is further enhanced by its integration into modern digital twin pipelines through support for Universal Scene Description (USD) workflows. This interoperability is essential for large-scale enterprise projects where different departments, ranging from architectural planning to AI training, must collaborate within a unified digital space. By facilitating data exchange with platforms like NVIDIA Omniverse, Reallusion ensures that its human-centric models can be part of a larger, multi-disciplinary simulation effort. This connectivity has led to adoption by global industrial leaders in the automotive and electronics sectors, including companies like BMW, Toyota, and Foxconn. These organizations have used the platform to refine their plant layouts and optimize electronics manufacturing setups, showing that the integration of realistic human behavior is no longer a luxury but a strategic necessity for maintaining a competitive edge in modern production.

Operational Outcomes: Strategic Implementation and Future Insight

The transition toward validated, human-centric simulation has fundamentally altered how industrial projects are planned and executed across various global sectors. Organizations have moved beyond simple visualizations and adopted a mindset of “validation-ready” modeling, in which every manual task is tested for ergonomic safety and efficiency. This approach has allowed automotive manufacturers to identify potential fatigue-related bottlenecks in assembly lines long before the start of production. The technology’s utility also extends into the public sector, where it has been implemented for safety planning and immersive training experiences for government agencies. By utilizing high-fidelity motion data and logic-driven behavior, these projects provide a realistic testing ground for emergency response and public flow management. Taken together, these applications indicate that the ability to model human variability accurately is one of the most critical components of any complex system simulation.

More recently, the focus for industrial planners has shifted toward the continuous integration of real-time data to maintain the accuracy of their digital twins. Teams have implemented decentralized data capture workflows, enabling on-site supervisors to update simulation models as work processes evolve on the shop floor. They have prioritized node-based motion planning to create flexible operational templates that can be rapidly redeployed across different facility locations. This strategic focus keeps the human element a central pillar of the planning process rather than a secondary consideration. These tools also offer a clear roadmap for organizations seeking to harmonize their workforce with increasingly automated environments. Ultimately, the deployment of these simulation strategies shows that the most effective way to optimize a production system is to treat the human operator as a dynamic, measurable, and essential part of the digital twin ecosystem.