
The promise of AI in logistics often overshadows its most critical dependency: data. Companies invest millions in AI platforms to optimize routes, predict demand, and automate warehouses, only to see projects stall. The problem is rarely the algorithm. It is the fuel it runs on.

Raw operational data is noise. Actionable AI requires clean, structured, and meticulously labeled datasets that teach machine learning (ML) models the nuances of a complex supply chain. This process, known as data annotation, is the bridge between chaotic information and predictive insight. It is the often-overlooked foundation for building truly intelligent logistics networks.

Mastering this process is what separates companies stuck in AI pilots from those realizing measurable gains. Industry results indicate that companies using AI-driven inventory systems see a 35% reduction in inventory levels, a 65% boost in service levels, and a 15% decrease in overall logistics costs.

This post explores how data annotation is transforming logistics from reactive guesswork into a precise, predictive, and principled operation.

From Raw Data to Real-Time Decisions

The tangible power of AI is unlocked through precisely labeled datasets that train ML models to understand complex operational scenarios. In warehouse automation, algorithms analyze annotated data, from historical purchase patterns and SKU-level velocity to market trends and weather forecasts, to predict product demand with remarkable accuracy. This shifts inventory from a static liability to a dynamic, demand-driven asset.

By maintaining optimal stock levels, companies avoid costly overstocking and reduce the sales lost to stockouts. Strategically positioning products closer to anticipated demand centers also shortens delivery times. This predictive capability, built on a foundation of well-annotated historical data, lowers storage costs and boosts customer satisfaction through faster fulfillment.

Beyond the warehouse, data annotation is instrumental in optimizing the movement of goods. AI-driven route optimization systems rely on annotated datasets that encompass traffic patterns, fuel costs, vehicle telematics, and driver performance metrics to calculate the most efficient delivery paths. This does more than reduce transportation costs; it enables dynamic rerouting to avoid disruptions before they escalate into costly delays.

Simultaneously, real-time shipment tracking has been revolutionized by AI-powered virtual assistants. Trained on annotated datasets of customer inquiries and logistics data, these systems handle a high volume of tracking requests instantly. This provides customers with the transparency they demand while freeing human agents to manage more complex exceptions, creating a faster, smoother experience powered by intelligent systems.

Unlocking Value in Unstructured Data

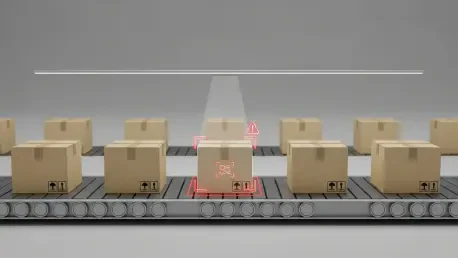

To push the boundaries of logistics AI, organizations are employing sophisticated data annotation techniques for unstructured data like images and video. Image annotation is crucial for training computer vision models to perform quality control and verification tasks throughout the supply chain.

Annotators label images of damaged packages, marking crushed boxes, leaks, or signs of tampering, so that AI systems can learn to flag issues for inspection automatically. This streamlines claims processing and improves overall quality management. Intel’s own logistics team faced this exact challenge at scale, struggling with time-consuming, inconsistent manual inspections of damaged cartons across its global warehouses. By training a computer vision model on annotated images of damaged versus acceptable boxes, the team deployed an AI inspection application across its sorting facilities. The custom-built application reduced box inspection time by 90%. It delivers instant, objective damage assessments, alerts stakeholders with images and product information within seconds, and accelerates the entire claim-filing process.
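To make the idea of an annotated image concrete, here is a minimal sketch of what one label record and a basic sanity check might look like. The schema, the label set, and the names `BoxAnnotation` and `validate` are hypothetical illustrations, not the format used by Intel or by any particular annotation tool.

```python
from dataclasses import dataclass

# Hypothetical label set, based on the damage types mentioned above.
DAMAGE_LABELS = {"crushed", "leak", "tampering", "acceptable"}


@dataclass(frozen=True)
class BoxAnnotation:
    """One labeled region in a carton image (pixel coordinates)."""
    image_id: str
    label: str
    x: int       # left edge of the bounding box
    y: int       # top edge of the bounding box
    width: int
    height: int


def validate(ann, image_width, image_height):
    """Return a list of problems found in an annotation (empty if none)."""
    problems = []
    if ann.label not in DAMAGE_LABELS:
        problems.append(f"unknown label: {ann.label}")
    if ann.width <= 0 or ann.height <= 0:
        problems.append("box has non-positive size")
    if (ann.x < 0 or ann.y < 0
            or ann.x + ann.width > image_width
            or ann.y + ann.height > image_height):
        problems.append("box falls outside the image")
    return problems


good = BoxAnnotation("carton-0001.jpg", "crushed", 120, 80, 200, 150)
bad = BoxAnnotation("carton-0002.jpg", "dented", 600, 400, 100, 100)

print(validate(good, 640, 480))
print(validate(bad, 640, 480))
```

Checks like these catch malformed labels before they reach training, which is cheaper than discovering them through a poorly performing model.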

In last-mile delivery, image annotation enables automated proof-of-delivery verification. Models trained on images of parcels at doorsteps, with visible house numbers or recipients, can confirm successful deliveries. This significantly reduces disputes, minimizes failed delivery attempts, and enhances customer trust in the final stage of the logistics journey.

Integrating and annotating diverse external data sources provides AI models with a deeper contextual understanding. Video feeds from dashcams and CCTV cameras can be annotated to identify patterns related to loading delays or unsafe driving behaviors. Data from Internet of Things (IoT) sensors on smart shelves or in fleet tracking systems provides granular insights into inventory levels and vehicle status. When these varied data streams are organized through meticulous annotation, businesses can develop more robust predictive models that prevent costly bottlenecks and create a more resilient supply chain.

Navigating the Complexities of Annotation at Scale

While the benefits are clear, treating data annotation as a simple, low-level task is a strategic blunder. High-quality annotation at an enterprise scale presents significant operational challenges that can derail AI initiatives if not managed properly. The first hurdle is the sheer volume of data. A single autonomous delivery vehicle can generate terabytes of sensor and video data daily, and that data must be labeled before it can be used to train a model.

The second challenge is maintaining quality and consistency. When multiple human annotators work on a large dataset, their subjective interpretations can introduce inconsistencies that corrupt the training data. Without clear guidelines, rigorous quality assurance, and standardized tools, these errors can teach an AI model the wrong lessons, leading to flawed predictions and poor operational performance. Gartner estimates that poor data quality costs organizations an average of $15 million per year, encompassing direct losses from wasted resources and missed opportunities, as well as indirect costs from reduced efficiency and flawed decision-making.
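One common way to quantify consistency between annotators is an agreement statistic such as Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain-Python illustration for two annotators; the function name and the sample labels are made up for the example, and a real quality-assurance process would likely use more items and more annotators.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    # Fraction of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        # Both annotators used a single identical label for every item.
        return 1.0
    return (observed - expected) / (1 - expected)


# Illustrative labels for eight package images.
annotator_1 = ["damaged", "ok", "ok", "damaged", "ok", "ok", "damaged", "ok"]
annotator_2 = ["damaged", "ok", "damaged", "damaged", "ok", "ok", "ok", "ok"]

kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.3f}")
```

Here the annotators agree on 6 of 8 items (75%), but because chance agreement is about 53%, kappa is only about 0.47. A low score like this is a signal to tighten the labeling guidelines before more data is annotated.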

Finally, businesses must confront the cost-benefit calculation. High-quality annotation requires significant investment in skilled labor, technology platforms, and robust project management. Leaders must justify this upfront cost by clearly linking it to long-term business outcomes, such as reduced fuel consumption, higher inventory turnover, or improved customer retention. Framing annotation not as a cost center but as a strategic investment in a core business asset (the data itself) is essential for securing the necessary resources.

Human Expertise as a Force Multiplier

Machine learning excels at identifying patterns, but the nuanced knowledge of human experts remains indispensable. The Human-in-the-Loop (HITL) annotation methodology systematically embeds this expertise into AI models. The process begins with logistics professionals manually labeling baseline datasets, such as identifying anomalies in delivery routes or classifying damaged parcels from images.

This initial, curated dataset serves as the “ground truth” for training an ML model. This foundational step ensures the model’s learning is guided by deep domain knowledge, capturing operational complexities that an algorithm could not infer from raw data alone. The accuracy gains delivered by HITL systems in complex, high-stakes domains are well documented. Real-world implementations show healthcare diagnostic accuracy rising from 92% to 99.5%, document processing accuracy improving from roughly 80% to above 95%, and fraud detection false positives dropping by 50%. Fully automated pipelines, without domain-informed human oversight, consistently fail to match these improvements.

Once trained, the model transitions into an assistive role. It pre-labels new logistics data, suggesting tags for optimal routes or flagging potential delays. Human annotators then review, correct, and refine these machine-generated labels, focusing their expertise on verifying accuracy and handling edge cases. This iterative feedback loop, where human corrections are fed back into the model for retraining, creates a powerful synergy. With each cycle, the AI becomes more adept at understanding operational nuances, allowing human experts to focus on the most complex data and drive continuous improvement.
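The review cycle described above can be sketched in a few lines. This example assumes the model reports a confidence score with each pre-label and that only low-confidence items are sent to human reviewers; the threshold, the `PreLabel` type, and the function names are hypothetical choices for illustration, not a prescribed workflow.

```python
from dataclasses import dataclass


@dataclass
class PreLabel:
    """A machine-suggested label with the model's confidence in it."""
    item_id: str
    label: str
    confidence: float


def route_for_review(pre_labels, threshold=0.9):
    """Split pre-labels into auto-accepted and human-review queues."""
    auto_accepted, needs_review = [], []
    for p in pre_labels:
        if p.confidence >= threshold:
            auto_accepted.append(p)
        else:
            needs_review.append(p)
    return auto_accepted, needs_review


def build_retraining_set(reviewed, corrections):
    """Apply human corrections; items without a correction keep the model's label."""
    return [(p.item_id, corrections.get(p.item_id, p.label)) for p in reviewed]


batch = [
    PreLabel("pkg-001", "damaged", 0.97),
    PreLabel("pkg-002", "ok", 0.62),
    PreLabel("pkg-003", "ok", 0.95),
    PreLabel("pkg-004", "damaged", 0.55),
]

accepted, review_queue = route_for_review(batch, threshold=0.9)

# The reviewer overturns pkg-002 and confirms pkg-004 as labeled.
human_corrections = {"pkg-002": "damaged"}
retraining_set = build_retraining_set(review_queue, human_corrections)
print(retraining_set)
```

The reviewed pairs in `retraining_set` are what would be fed back into the next training round. Raising the threshold sends more items to humans, which trades annotation cost for label quality.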

The New Mandate for Intelligent Logistics

The journey toward an intelligent supply chain is not built on algorithms alone. It is constructed label by label, dataset by dataset. Viewing data annotation as a mere administrative task is a recipe for failure. Instead, it must be treated as a core strategic competency, essential for unlocking the true potential of AI in a competitive and unforgiving market. The quality of the insights an AI can deliver is a direct reflection of the quality of the data it was trained on.

As AI becomes more integrated into operations, its ethical application also comes to the forefront. Leading organizations are now programming AI not just for efficiency but for fairness and social responsibility. By annotating datasets with ethical considerations, such as prioritizing shipments of medical supplies to underserved areas or ensuring equitable driver workloads, companies can build logistics systems that are both profitable and principled. This evolution moves beyond optimizing for cost and speed to creating supply chains that are resilient, responsive, and aligned with broader human values.

For leaders steering their organizations through this transformation, the path forward requires a shift in mindset. Success hinges on recognizing the foundational role of data quality and investing accordingly. The companies that thrive will be those that build a robust data annotation engine fueled by technology and guided by human expertise. This means treating annotation as a core competency rather than a back-office task, integrating domain experts directly into the labeling process, and investing in rigorous quality control to ensure data consistency and accuracy. Finally, organizations must measure the return on investment of annotation through clear business KPIs, so that labeling work can be tied to meaningful operational outcomes.