Rohit Laila is a seasoned veteran in the logistics and supply chain sector, boasting decades of hands-on experience navigating the complexities of warehouse operations and delivery networks. Throughout his career, he has witnessed the evolution of technology from basic inventory tracking to the sophisticated, AI-driven ecosystems we see today. His passion for innovation is matched only by his practical understanding of the friction points that occur when legacy systems meet modern demands. Rohit provides a unique perspective on why many warehouse operators feel “stuck” in their digital partnerships and how the shift toward modular, cloud-native architectures is fundamentally changing the rules of the game.

The following discussion explores the lifecycle of warehouse management systems, from the messy reality of initial go-lives to the warning signs of a system that can no longer scale. We delve into the technical advantages of microservices, the integration of cutting-edge robotics, and the strategic questions leaders must ask to find a technology partner that actually grows with them.

Implementation often involves tense negotiations and compromises on edge cases just to hit a go-live date. What specific “cracks” in functionality typically emerge during these initial months, and how do these early patches impact long-term operational scalability? Please share a step-by-step example of a common workaround.

In those high-pressure weeks leading up to a midnight go-live, the “cracks” almost always appear in the nuances of exception handling, such as how the system processes a short-pick or a damaged return. The core logic is often sound, but the edge cases, those 5% of orders that don’t follow the happy path, get patched with manual overrides to keep the trucks moving. These patches create technical debt: your staff ends up performing five manual clicks or a paper-based verification for every exception, which feels manageable on day one but becomes a choke point as you scale. Take a common workaround for a system that can’t handle a specific multi-pack barcode. First, the scan fails and a supervisor has to step in; second, the supervisor manually “adjusts in” the stock to a virtual bin; third, they “adjust out” the individual units. That three-step manual intervention becomes a permanent, invisible tax on your efficiency, and it prevents you from ever reaching the “lights-out” automation you originally promised the board.
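To make that invisible tax concrete, a back-of-the-envelope calculation helps. The sketch below uses the 5% exception rate and the five manual clicks mentioned above; the daily order volumes and the 10 seconds per click are purely illustrative assumptions, not measured data.

```python
def manual_exception_hours(orders_per_day: int,
                           exception_rate: float,
                           clicks_per_exception: int,
                           seconds_per_click: float) -> float:
    """Estimate daily labor hours spent on manual exception workarounds."""
    exceptions = orders_per_day * exception_rate
    total_seconds = exceptions * clicks_per_exception * seconds_per_click
    return total_seconds / 3600


# 5% exceptions and five clicks each, as described in the interview;
# the order volumes and 10 s per click are hypothetical assumptions.
for volume in (2_000, 20_000):
    hours = manual_exception_hours(volume, 0.05, 5, 10.0)
    print(f"{volume:>6} orders/day -> {hours:.1f} h of manual clicks")
```

The point is the scaling: the cost of the workaround grows linearly with volume, so roughly 1.4 hours a day at the lower volume becomes nearly 14 hours at ten times the volume.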

When volumes grow or new automation is budgeted, existing systems can feel more like a constraint than a tool. What are the specific red flags that indicate a system is merely “tolerating” growth rather than supporting it? Please provide an anecdote regarding a difficult automation integration.

The clearest red flag is when a simple request for a new process flow turns into a three-month negotiation with your vendor about “core logic” changes. You know your system is merely tolerating you when every minor adjustment, such as adding a new packing station or changing a wave release rule, requires a massive regression test because “everything touches everything else.” I recall a situation where a firm tried to integrate a new high-speed sorter into a monolithic WMS, and the system’s rigid architecture couldn’t keep up with the sub-second data pings required for real-time routing. We ended up in a cycle where the warehouse was forced to slow down the physical conveyor belts to match the “thinking speed” of the software, which is the ultimate sign that your technology is a cage rather than a catalyst.
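The sorter anecdote follows from simple kinematics: a parcel keeps moving from the scanner toward the divert while the WMS decides where it should go, so decision latency caps belt speed. A minimal sketch, assuming a hypothetical layout; the distance, latencies, and actuation time are invented for illustration only.

```python
def max_belt_speed(scan_to_divert_m: float,
                   decision_latency_s: float,
                   actuation_time_s: float = 0.0) -> float:
    """Highest belt speed (m/s) at which the routing answer arrives in time.

    A parcel needs scan_to_divert_m / speed seconds to reach the divert, and
    that travel time must cover the WMS decision latency plus the time the
    divert mechanism needs to fire.
    """
    required_s = decision_latency_s + actuation_time_s
    if required_s <= 0:
        raise ValueError("latency plus actuation time must be positive")
    return scan_to_divert_m / required_s


# Hypothetical layout: 1.5 m from scanner to divert, 0.1 s actuation time.
responsive = max_belt_speed(1.5, 0.25, 0.1)  # service answering in 250 ms
sluggish = max_belt_speed(1.5, 1.4, 0.1)     # monolith answering in 1.4 s
print(f"{responsive:.1f} m/s vs {sluggish:.1f} m/s")
```

With these made-up numbers, the slower decision path forces the belt down from roughly 4.3 m/s to 1.0 m/s, which is exactly the "thinking speed" ceiling the anecdote describes.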

Transitioning to a cloud-native, microservices-based architecture allows different capabilities to evolve independently. How does this structure change the way teams handle system updates compared to older, monolithic platforms? What metrics should a manager track to prove that these independent services are actually improving daily efficiency?

In the old world of monolithic platforms, an update was a terrifying “big bang” event that happened once a year and risked breaking the entire operation; with microservices, updates become a continuous, non-disruptive stream of refinements. You can update your “intelligent picking” module on a Tuesday morning without ever touching your “shipping” or “receiving” logic, so the warehouse never has to stop breathing. To prove this is working, managers should look beyond “uptime” and track “deployment frequency” and “mean time to recovery” for each service. If you can deploy a new feature or fix a bug in 24 hours rather than six months, and your “cost per order” stays flat even as SKU complexity rises, you have strong evidence that your modular architecture is delivering real-world agility.
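Deployment frequency and mean time to recovery are simple to compute once deployments and incidents are logged per service. The sketch below assumes a hypothetical record format (a service name plus open and resolve timestamps); in practice these records would come from a team's CI/CD and incident tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    service: str        # e.g. "picking" (hypothetical service name)
    opened: datetime
    resolved: datetime


def deployment_frequency(deploys: list[datetime], window_days: int) -> float:
    """Average deployments per week over the observation window."""
    return len(deploys) / (window_days / 7)


def mean_time_to_recovery(incidents: list[Incident], service: str) -> timedelta:
    """Average open-to-resolved time for one service's incidents."""
    durations = [i.resolved - i.opened for i in incidents if i.service == service]
    if not durations:
        return timedelta(0)
    return sum(durations, timedelta(0)) / len(durations)


# Illustrative data only: four weeks of records for two services.
t0 = datetime(2024, 3, 5, 9, 0)
incidents = [
    Incident("picking", t0, t0 + timedelta(hours=2)),
    Incident("picking", t0 + timedelta(days=3), t0 + timedelta(days=3, hours=4)),
    Incident("shipping", t0, t0 + timedelta(hours=10)),
]
picking_deploys = [t0 + timedelta(days=d) for d in range(0, 28, 2)]  # every 2 days

print(deployment_frequency(picking_deploys, window_days=28))  # 3.5 per week
print(mean_time_to_recovery(incidents, "picking"))             # 3:00:00
```

Measuring per service, rather than for the platform as a whole, is what lets a manager see that the picking module can change weekly without dragging shipping's recovery times along with it.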

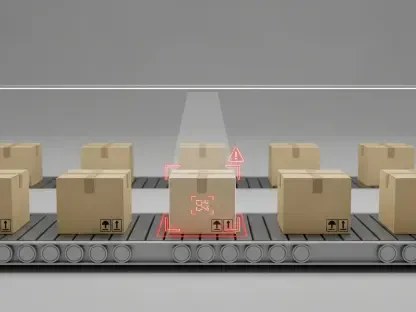

Integrating robotics, goods-to-person platforms, and AI can feel like a wholesale redesign in older systems. How do defined services simplify the connection to these emerging technologies, and what does a successful rollout look like? Please outline the specific stages of connecting a new shuttle or storage system.

Defined services act like a universal adapter, allowing you to plug in a new autonomous mobile robot (AMR) or a shuttle system without rewriting the DNA of your warehouse. A successful rollout using this method looks less like a “rebuild” and more like an “addition,” where the WMS speaks to the robotics control system through clean, standardized APIs. First, you define the specific “service” the robot will perform, such as a “bin-to-picker” movement. Second, you run a “sandbox” simulation in which the microservice handles virtual data before the hardware arrives. Third, you run a “pilot loop” with a single shuttle aisle to confirm that the handshake between the WMS and the hardware is seamless. Finally, you scale the service across the floor, knowing that if the robotics system needs an update, it won’t crash your inventory records.
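The "universal adapter" idea can be sketched as a narrow service contract that the WMS codes against, with a simulated implementation standing in during the sandbox stage and a simple acceptance check for the pilot loop. Everything here is hypothetical: `BinToPickerService`, `SimulatedShuttle`, `run_pilot`, and the request fields are illustrative names, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class BinRequest:
    bin_id: str
    pick_station: str


@dataclass(frozen=True)
class BinDelivery:
    bin_id: str
    pick_station: str
    accepted: bool
    reason: str = ""


class BinToPickerService(Protocol):
    """Hypothetical contract the WMS depends on; real drivers would implement it."""
    def request_bin(self, request: BinRequest) -> BinDelivery: ...


class SimulatedShuttle:
    """Sandbox stand-in: answers from in-memory data, no hardware required."""
    def __init__(self, known_bins: set[str], stations: set[str]) -> None:
        self.known_bins = known_bins
        self.stations = stations

    def request_bin(self, request: BinRequest) -> BinDelivery:
        if request.bin_id not in self.known_bins:
            return BinDelivery(request.bin_id, request.pick_station, False, "unknown bin")
        if request.pick_station not in self.stations:
            return BinDelivery(request.bin_id, request.pick_station, False, "unknown station")
        return BinDelivery(request.bin_id, request.pick_station, True)


def run_pilot(service: BinToPickerService, requests: list[BinRequest]) -> float:
    """Pilot-loop check: share of requests the service accepted."""
    if not requests:
        raise ValueError("pilot needs at least one request")
    results = [service.request_bin(r) for r in requests]
    return sum(r.accepted for r in results) / len(results)


sandbox = SimulatedShuttle({"B-001", "B-002"}, {"STATION-1"})
reqs = [BinRequest("B-001", "STATION-1"), BinRequest("B-999", "STATION-1")]
print(run_pilot(sandbox, reqs))  # 0.5
```

Because the WMS depends only on `request_bin`, swapping the simulator for a real shuttle driver at the pilot stage, or updating that driver later, leaves the inventory logic untouched.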

Selecting a replacement WMS often involves more honesty about operational needs than the first time around. What hard questions should leaders ask vendors to ensure they aren’t just entering another defensive partnership? Provide a few examples of “must-have” conditions that distinguish a collaborative partner.

The second time you buy a WMS, you aren’t looking for a “feature list”; you are looking for a philosophy of flexibility. You must ask a vendor: “If I change my fulfillment strategy from B2B to D2C tomorrow, exactly how many lines of code need to change, and who owns that change: us or you?” The “must-have” conditions that distinguish a collaborative partner include open API documentation as standard, a roadmap built on quarterly “capability drops” rather than multi-year overhauls, and a pricing model that doesn’t penalize you for adding new automation. You want a partner who says “yes, that’s possible” because their system was designed to be taken apart and put back together, not a vendor who treats every request as a breach of contract.

What is your forecast for the future of warehouse management systems?

I believe we are moving toward a “composable warehouse” era where the WMS as a single, heavy application effectively disappears. Instead, operations will be run by a collection of hyper-specialized “capability units” (one for AI-driven slotting, one for robotic orchestration, and one for labor management) that all communicate through a central cloud fabric. In the next five years, the most successful warehouses won’t be the ones with the “biggest” software, but the ones with the most “fluid” software, able to adapt to a new delivery channel or a new robotic picker in days rather than months. We are heading toward a future where the software is as dynamic as the stock moving through the building, and the word “implementation” will be replaced by the word “evolution.”
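A toy, in-process version of the "central cloud fabric" shows what composable means in practice: capability units never call each other directly; they publish and subscribe to events on a shared bus. This is a minimal sketch under that assumption. The topic names, units, and location code are hypothetical, and a production fabric would be a managed message broker rather than a Python dictionary.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventFabric:
    """Minimal publish/subscribe bus shared by capability units."""
    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> None:
        # Deliver synchronously, in subscription order.
        for handler in self._subscribers[topic]:
            handler(event)


fabric = EventFabric()
log: list[str] = []


# Hypothetical capability units: each reacts to events; none imports the others.
def slotting_unit(event: dict) -> None:
    log.append(f"slotting: re-rank location for {event['sku']}")
    fabric.publish("slot.changed", {"sku": event["sku"], "location": "A-01-03"})


def robotics_unit(event: dict) -> None:
    log.append(f"robotics: route bots to {event['location']}")


def labor_unit(event: dict) -> None:
    log.append(f"labor: rebalance pickers for {event['sku']}")


fabric.subscribe("order.received", slotting_unit)
fabric.subscribe("order.received", labor_unit)
fabric.subscribe("slot.changed", robotics_unit)

fabric.publish("order.received", {"sku": "SKU-42"})
print("\n".join(log))
```

Under this design, adding a new robotic picker means subscribing one more handler rather than modifying the slotting or labor code, which is the kind of days-not-months change the forecast has in mind.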