Rohit Laila brings decades of leadership experience in the logistics and supply chain sector, having overseen complex delivery networks where precision is the only path to success. Throughout his career, he has navigated the intersection of traditional operations and cutting-edge technology, focusing on how innovation can mitigate systemic risks. In this conversation, we explore the evolving landscape of supply chain integrity, the integration of AI-driven visual inspection, and the critical need for robust governance to prevent cascading failures in an increasingly automated world.

Large organizations manage thousands of suppliers where a single failure can spiral into regulatory violations or brand damage. How do you assess the “hidden” costs of these disruptions, and what specific metrics should leaders track to catch these risks before they escalate into an enterprise-wide crisis?

The hidden costs of a supply chain failure often dwarf the immediate logistics expenses because a single failure triggers a domino effect across the entire organization. When a core component fails, you aren’t just looking at a production delay; you are facing potential layoffs, massive cost overruns, and regulatory violations that can lead to billion-dollar fines. To catch these risks early, leaders must look beyond “on-time delivery” and monitor multi-tiered supplier performance and transparency across the billions of dollars they spend. We have seen how a single non-conforming part can lead to emergency landings and viral photos of debris in residential yards, which can destroy investor confidence and brand trust in a matter of hours. By measuring the “quality gap” between what suppliers deliver and what internal specifications require through automated platforms, companies can identify where these latent crises are brewing.
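As an editorial illustration, one simple way to put a number on the “quality gap” is the share of delivered parts whose measurement falls outside the internal tolerance. The sketch below assumes that definition; the function name `quality_gap` and the sample values are hypothetical and do not come from the interview or from any specific platform.

```python
def quality_gap(measurements, nominal, tolerance):
    """Fraction of delivered parts outside nominal +/- tolerance.

    Assumption: the "quality gap" is modeled as a simple out-of-spec rate.
    """
    if not measurements:
        raise ValueError("no measurements supplied")
    out_of_spec = sum(1 for m in measurements if abs(m - nominal) > tolerance)
    return out_of_spec / len(measurements)


# Hypothetical delivery: nominal dimension 100 units, tolerance of 2 units.
gap = quality_gap([100, 101, 104, 95], nominal=100, tolerance=2)
print(f"quality gap: {gap:.0%}")
```

Tracked per supplier and per tier over time, a rising value of this kind would be one early signal to investigate before a defect reaches final assembly.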

Production work often happens outside normal processes in specialized remediation lines, where documentation gaps can lead to critical hardware errors. What are the specific vulnerabilities of these manual steps, and how can automated inspection tools bridge the gap in quality control when documentation fails?

Manual remediation lines, often called “traveler lines,” are notoriously vulnerable because they operate outside the standard, highly controlled production flow. The biggest risk is the “documentation blind spot,” where a technician might remove a critical component, such as a door plug, to perform a fix but fail to record its reinstallation. Automated inspection tools bridge this gap by acting as a continuous, unbiased witness that doesn’t rely on a human remembering to fill out a form. For example, if an AI system is integrated into the workspace, it can confirm that all four required retention bolts are physically present and tightened before a unit moves to the next stage. This ensures that even if the paperwork is missing or incomplete, the physical reality of the product is verified against the digital standard.
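The bolt check described above can be sketched as a small gate that runs on whatever the vision system reports. This is a minimal illustration, not a real inspection API: the `BoltObservation` type, the `may_advance` function, and the position labels are all hypothetical, and the upstream detection step is assumed to exist.

```python
from dataclasses import dataclass

REQUIRED_BOLTS = 4  # the count used in the interview's example


@dataclass(frozen=True)
class BoltObservation:
    position: str    # hypothetical label for a bolt location
    present: bool    # did the vision system see a bolt here?
    tightened: bool  # did it judge the bolt to be tightened?


def may_advance(observations, required=REQUIRED_BOLTS):
    """Return (ok, problems); the unit advances only when problems is empty."""
    problems = []
    if len(observations) < required:
        problems.append(f"expected {required} bolts, saw {len(observations)}")
    for obs in observations:
        if not obs.present:
            problems.append(f"{obs.position}: missing")
        elif not obs.tightened:
            problems.append(f"{obs.position}: not tightened")
    return (not problems, problems)


observed = [
    BoltObservation("upper-left", True, True),
    BoltObservation("upper-right", True, True),
    BoltObservation("lower-left", False, False),
    BoltObservation("lower-right", True, False),
]
ok, problems = may_advance(observed)
print(ok, problems)
```

The point of the design is that the gate depends only on what was physically observed, so it still blocks the unit when the paperwork says nothing at all.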

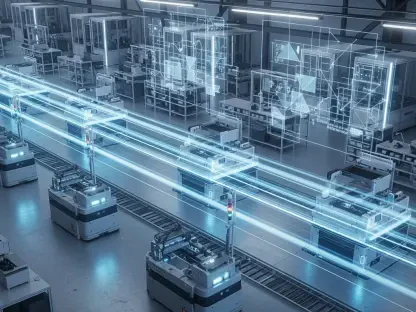

Visual data, including photos and video from the factory floor, is now being integrated into AI-driven inspection systems. Could you explain the step-by-step process of deploying these tools across a global supply chain and the challenges of ensuring consistent data quality from diverse, multi-tiered suppliers?

Deploying visual AI begins with establishing a baseline of “what good looks like” by feeding the system thousands of images of conforming and non-conforming parts. The next step is installing high-resolution cameras at critical nodes in the multi-tiered supplier network, allowing for real-time monitoring of assembly steps that were previously invisible to the parent company. The primary challenge is consistency; a supplier in one region might have different lighting or camera angles than another, which can confuse a sensitive AI. To overcome this, organizations must implement standardized work instructions and automated platforms that normalize data from these diverse sources. Once the data is consistent, the AI can flag a defect at a supplier’s facility long before that part is ever shipped to the main assembly line.
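To make the normalization step concrete, here is a minimal sketch of one common technique, rescaling each grayscale image to zero mean and unit variance so that overall brightness and contrast differences between sites drop out. It is an assumption that a platform would use this particular method; the function name `normalize_brightness` and the tiny 2x2 "images" are purely illustrative, and real pipelines would also need to handle camera angle, color, and resolution.

```python
from statistics import mean, pstdev


def normalize_brightness(pixels):
    """Rescale a grayscale image (list of rows) to zero mean, unit variance."""
    flat = [p for row in pixels for p in row]
    mu = mean(flat)
    sigma = pstdev(flat)
    if sigma == 0:
        # A perfectly uniform image carries no contrast information.
        return [[0.0 for _ in row] for row in pixels]
    return [[(p - mu) / sigma for p in row] for row in pixels]


# The same part photographed under dim and bright lighting (hypothetical values).
dim_site = [[10, 20], [30, 40]]
bright_site = [[110, 130], [150, 170]]

a = normalize_brightness(dim_site)
b = normalize_brightness(bright_site)
same = all(
    abs(x - y) < 1e-9
    for row_a, row_b in zip(a, b)
    for x, y in zip(row_a, row_b)
)
print(same)
```

After this step, the two sites' images of the same part are numerically comparable, which is the precondition for a single model to judge them by the same standard.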

Supply chain cyberattacks are increasingly driven by autonomous AI agents that can target dozens of organizations simultaneously. What does a coordinated AI attack look like in practice, and what specific defensive protocols should a company implement to protect its internal chatbots and data systems?

A coordinated AI attack is terrifying because of its scale; instead of a single hacker targeting one firm, autonomous agents can launch sophisticated, simultaneous strikes against dozens of companies within a single supply chain ecosystem. We have seen demonstrations where internal chatbot systems are compromised in minutes, giving attackers a “backdoor” into proprietary data. To defend against this, companies must treat AI itself as a new entry point for risk and implement protocols that go beyond traditional firewalls. This includes the use of “adversarial testing” where your own security teams try to trick the AI, and the establishment of clear principles for responsible AI use that limit the chatbot’s access to sensitive production data. Given that 65% of organizations have already experienced a supply chain cyberattack, these protocols are no longer optional.

While AI offers speed and consistency, human oversight remains a requirement for high-stakes decision-making. How should a company structure its governance to balance automated review with human judgment, and what methods should audit teams use to test the reliability of these AI-driven controls?

Governance should be structured so that AI handles the high-volume “noise” of data monitoring, while humans are reserved for high-stakes, nuanced decisions that require ethical or strategic judgment. A company must establish a clear hierarchy where AI outputs are treated as recommendations that require verification by human experts before a product is cleared for public use. Audit and assurance teams should use “blind testing” methods, where they occasionally introduce known errors into the system to see if the AI catches them and if the human supervisor reacts appropriately. This rigorous testing ensures that leadership has real confidence in the controls and prevents “automation bias,” where employees stop questioning the machine.
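The blind-testing idea can be sketched as a scorecard over deliberately seeded errors. The example below is an illustration under stated assumptions: the `SeededTrial` record, the `blind_test_summary` function, and the sample trials are hypothetical, and every trial is assumed to contain a known, intentionally introduced defect.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SeededTrial:
    item_id: str
    ai_flagged: bool       # did the AI control flag the seeded error?
    human_escalated: bool  # did the human reviewer act on or catch it?


def blind_test_summary(trials):
    """Summarize how often seeded errors were caught by the AI and by humans."""
    total = len(trials)
    if total == 0:
        raise ValueError("no seeded trials to evaluate")
    ai_caught = sum(t.ai_flagged for t in trials)
    human_caught = sum(t.human_escalated for t in trials)
    # Errors the AI missed but a human still caught: evidence that
    # reviewers are questioning the machine rather than deferring to it.
    human_only = sum(t.human_escalated and not t.ai_flagged for t in trials)
    return {
        "ai_catch_rate": ai_caught / total,
        "human_catch_rate": human_caught / total,
        "caught_by_human_only": human_only,
    }


trials = [
    SeededTrial("unit-001", ai_flagged=True, human_escalated=True),
    SeededTrial("unit-002", ai_flagged=True, human_escalated=False),
    SeededTrial("unit-003", ai_flagged=False, human_escalated=True),
    SeededTrial("unit-004", ai_flagged=False, human_escalated=False),
]
print(blind_test_summary(trials))
```

A low human-only count alongside a high rate of AI-flagged errors that humans ignored would be one warning sign of the automation bias discussed above.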

What is your forecast for the future of AI in supply chain risk management?

I believe that within the next few years, AI will shift from being a competitive advantage to a mandatory regulatory requirement for global supply chains. As risks become more complex and attackers more sophisticated, the speed of human-only monitoring will simply be too slow to prevent catastrophic failures. We will see the rise of “autonomous compliance” where AI systems not only detect errors but automatically pause production lines or trigger supplier audits the moment a deviation is spotted. Organizations that fail to adopt these disciplined, AI-driven oversight models will likely find themselves uninsurable and unable to meet the safety expectations of the modern consumer.